Problem

The premise is almost the same as in this question. I'll restate for convenience.

Let $A$, $B$, $C$ be independent random variables uniformly distributed on $(-1,+1)$. What is the probability that the polynomial $Ax^2+Bx+C$ has real roots?

Note: The coefficients are now drawn from $(-1,+1)$ instead of $(0,1)$.

My Attempt

Preparation

When the coefficients are sampled from $\mathcal{U}(0,1)$, the probability that the discriminant is non-negative, that is, $P(B^2-4AC\geq0)$, is approximately $25.4\%$. This value can be obtained both theoretically and experimentally. The older question linked above has several good answers discussing both approaches.

Changing the sampling interval to $(-1, +1)$ makes things a bit more difficult from the theoretical perspective. Experimentally, it is rather simple. This is the code I wrote to simulate the experiment for $\mathcal{U}(0,1)$. Changing the interval from (0, theta) to (-1, +1) gives me an average probability of $62.7\%$ with a standard deviation of $0.3\%$.
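For readers who cannot follow the link, here is a minimal Monte Carlo sketch of the experiment. It is not the linked code: the function name `real_root_probability`, the sample count, and the seed are all assumptions chosen for illustration, and it uses only Python's standard `random` module.

```python
import random


def real_root_probability(low, high, n_samples=200_000, seed=0):
    """Estimate P(B^2 - 4AC >= 0) for i.i.d. A, B, C ~ U(low, high).

    Hypothetical helper for illustration; n_samples and seed are arbitrary.
    """
    rng = random.Random(seed)  # local generator so runs are reproducible
    hits = 0
    for _ in range(n_samples):
        a = rng.uniform(low, high)
        b = rng.uniform(low, high)
        c = rng.uniform(low, high)
        # Real roots exist exactly when the discriminant is non-negative.
        if b * b - 4 * a * c >= 0:
            hits += 1
    return hits / n_samples


if __name__ == "__main__":
    print("U(0, 1): ", real_root_probability(0.0, 1.0))
    print("U(-1, 1):", real_root_probability(-1.0, 1.0))
```

With this many samples the two estimates should land close to the $25.4\%$ and $62.7\%$ figures quoted above, up to sampling noise of roughly $0.1\%$.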

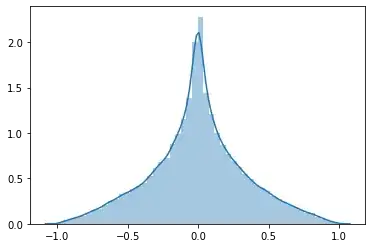

I plotted the simulated PDF and CDF. In that order, they are:

So I'm aiming to find a CDF that looks like the second image.

Theoretical Approach

The approach that I find easy to understand is outlined in this answer. Proceeding in a similar manner, we have

$$ f_A(a) = \begin{cases} \frac{1}{2}, &-1\leq a\leq+1\\ 0, &\text{ otherwise} \end{cases} $$

The PDFs are similar for $B$ and $C$.

The CDF for $A$ is

$$ F_A(a) = \begin{cases} \frac{a + 1}{2}, &-1\leq a\leq +1\\ 0,&a<-1\\ 1,&a>+1 \end{cases} $$

Let $X=AC$. I proceed to calculate the CDF of $X$ (for $x>0$) as:

$$ \begin{align} F_X(x) &= P(X\leq x)\\ &= P(AC\leq x)\\ &= \int_{c=-1}^{+1}P(Ac\leq x)f_C(c)dc\\ &= \frac{1}{2}\left(\int_{c=-1}^{+1}P(Ac\leq x)dc\right)\\ &= \frac{1}{2}\left(\int_{c=-1}^{+1}P\left(A\leq \frac{x}{c}\right)dc\right)\\ \end{align} $$

We take a quick detour to make some observations. First, when $0<c<x$, we have $\frac{x}{c}>1$. Similarly, $-x<c<0$ implies $\frac{x}{c}<-1$. Second, $A$ is constrained to the interval $[-1, +1]$. Finally, we are only interested in the case $x\geq 0$ because $B^2\geq 0$.

Continuing the calculation:

$$ \begin{align} F_X(x) &= \frac{1}{2}\left(\int_{c=-1}^{+1}P\left(A\leq \frac{x}{c}\right)dc\right)\\ &= \frac{1}{2}\left(\int_{c=-1}^{-x}P\left(A\leq \frac{x}{c}\right)dc + \int_{c=-x}^{0}P\left(A\leq \frac{x}{c}\right)dc + \int_{c=0}^{x}P\left(A\leq \frac{x}{c}\right)dc + \int_{c=x}^{+1}P\left(A\leq \frac{x}{c}\right)dc\right)\\ &= \frac{1}{2}\left(\int_{c=-1}^{-x}P\left(A\leq \frac{x}{c}\right)dc + 0 + 1 + \int_{c=x}^{+1}P\left(A\leq \frac{x}{c}\right)dc\right)\\ &= \frac{1}{2}\left(\int_{c=-1}^{-x}\frac{x+c}{2c}dc + 0 + 1 + \int_{c=x}^{+1}\frac{x+c}{2c}dc\right)\\ &= \frac{1}{2}\left(\frac{1}{2}\left(-x+x\left(\log(-x)-\log(-1)\right)+1\right) + 0 + 1 + \frac{1}{2}\left(-x+x\left(-\log(x)-\log(1)\right)+1\right)\right)\\ &= \frac{1}{2}\left(2 + \frac{1}{2}\left(-x+x(\log(x)) -x + x(-\log(x))\right)\right)\\ &= 1 - x \end{align} $$

I don't think this is correct.
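Two properties make the suspicion concrete. Any CDF must be non-decreasing, and because $A$ and $C$ are symmetric about zero, $P(AC\leq 0)$ must equal $\frac{1}{2}$. The result $1-x$ is decreasing in $x$ and equals $1$ at $x=0$, so it fails both. The sketch below compares a simulated CDF of $X=AC$ with $1-x$ at a few points; the helper name `empirical_cdf_of_product`, the sample count, and the seed are assumptions made for illustration only.

```python
import random


def empirical_cdf_of_product(x, n_samples=200_000, seed=0):
    """Estimate F_X(x) = P(AC <= x) for i.i.d. A, C ~ U(-1, 1).

    Hypothetical helper for illustration; n_samples and seed are arbitrary.
    """
    rng = random.Random(seed)  # local generator so runs are reproducible
    count = 0
    for _ in range(n_samples):
        a = rng.uniform(-1.0, 1.0)
        c = rng.uniform(-1.0, 1.0)
        if a * c <= x:
            count += 1
    return count / n_samples


if __name__ == "__main__":
    # Print the simulated CDF next to the derived candidate 1 - x.
    for x in (0.0, 0.25, 0.5, 0.75, 1.0):
        simulated = empirical_cdf_of_product(x)
        print(f"x={x:.2f}  simulated={simulated:.3f}  derived 1-x={1 - x:.3f}")
```

The simulated values should start near $\frac{1}{2}$ at $x=0$ and rise to $1$ at $x=1$, which is the opposite trend to $1-x$.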

My Specific Questions

- What mistake am I making? Can I even obtain the CDF through integration?

- Is there an easier way? I used this approach because I was able to understand it well. Shorter approaches are possible (as is evident from the $\mathcal{U}(0,1)$ case), but perhaps I need to read more before I can comprehend them. Any pointers in the right direction would be helpful.