Update: a full solution to the recursion below has now been found, and it is discussed here.

If $z_n < 2y_n$ then

- $y_{n+1} = 4y_n - 2z_n$

- $z_{n+1} = 2z_n + 3$

Else

- $y_{n+1} = 4y_n$

- $z_{n+1} = 2 z_n - 1$

Consider the following limit:

$$\lim_{n\rightarrow\infty} \frac{1}{n}\left(z_{n+1} - \sum_{k=1}^n z_k\right) \tag{$\star$}$$

The limit may or may not exist, and it may or may not depend on the initial conditions. Let us assume that the initial values $y_1$ and $z_1$ are strictly positive integers.

Question: If $y_1 \neq 2 z_1$ and $2y_1 \neq z_1$, is it true that $(\star)$ is always $1$, and otherwise the limit is always $3$?

Now let's the fun begin...

Let $d_n=\frac14(z_n-2z_{n-1}+1)$. The sequence $d_n$ represents the binary digits of some unknown number $x$, a number that depends on the initial conditions. It turns out that if $y_1=2, z_1=5$ then that number is $x=\sqrt{2}$. You may ask: so what?

Here is where it becomes fascinating:

If you answer my question about the limit converging to $1$, say for $y_1=2, z_1=5$, then you proved that exactly 50% of the binary digits of $\sqrt{2}$ are zero.

An article about this recursion (with connection to the digits distribution) can be found here. An application to the gaming industry can be found in section 2.1 in the following article. The source code I used in my computations is accessible here (Perl code using the Bignum library for exact arithmetic.) I can produce a large number of $z_n$ on request, for those interested and not familiar with high precision computing.

Hints and expectations

The case with the limit converging towards $1$, I call it the standard case. At this point, the result about the limit is still a conjecture. It is based on the fact that for a number such as $\sqrt{2}$, the distribution of the binary digits is believed to be uniform on $\{0,1\}$. Generally speaking, the limit is equal to $4p-1$ where $p$ is the proportion of binary digits equal to one in the number $x$ in question: this fact is easy to prove, almost trivial, as the formula in the limit was built with that goal in mind.

Also note that in the standard case, you can never have $y_n = 2z_n$ or $2y_n = z_n$ at any iteration $n$, otherwise, it would force the convergence towards $3$ according to this conjecture, and imply that $x$ is rational. Also, if the limit, rather than being $1$ was (say) $1.2$, it would imply that 55% of the binary digits of $\sqrt{2}$ are equal to one. Even proving (in the standard case) that the limit is strictly above $-1$ or strictly below $3$ would be a spectacular discovery: it would imply that the proportion of digits of $\sqrt{2}$ equal to zero is strictly positive (that is, it does not tend to zero as you look at longer and longer sequences of successive digits.) To this day, whether this fact is true or not remains a mystery.

I don't know if proving my conjecture is easy, extremely difficult, or impossible to prove or disprove. But I will accept any answer that brings some light to help answer my question, even if it is just a reference to relevant, very interesting previous work. Also, insights about the case where $y_1$ or $z_1$ are not integers, are welcome: for the few cases I tested so far, the limit was equal to $1$, unless $y_1=2z_1$ or $2y_1 = z_1$, but convergence can be slow when we are close to $y_1=2z_1$ or $2y_1 = z_1$.

Below is a chart showing convergence to $1$ (relatively slow, of the order $1/\sqrt{n}$ just like for the central limit theorem - not a coincidence - and chaotic) using $y_1=2, z_1=5$. The X-axis represents $n$, the number of iterations. The convergence is much faster when the limit is $3$.

Suggested path to solve this problem

One way to handle this problem is to consider the sequence $d_n$ of digits of $x$ as a realization of an ergodic stochastic process. Such processes have an equilibrium distribution, called attractor in chaos theory, even though the dynamical system considered here is entirely deterministic. See my article on the theory of randomness, here, and my book Applied Stochastic Processes, Chaos Modeling, and Probabilistic Properties of Numeration Systems, here. The equilibrium distribution is solution to a stochastic integral equation. Here we are dealing with discrete values, and with a discrete stochastic equation that seems to only have two solutions (the standard case, and the other one.) Assume that the probability (at any iteration $n$) for $d_n$ to be equal to one, is $p$. At equilibrium, the probability for $d_{n+1}$ to be equal to one, must also be $p$. This yields a stochastic equation, based on the recurrence system mentioned in the introduction, and the only unknown is $p$. In order for the equilibrium to hold (that is, solving this equation with respect to $p$) must yield $p=1/2$ in the standard case.

Update: One thing that could also help is looking at what happens with non-integer initial values. For instance, $y_1 \rightarrow 2, z_1 \rightarrow 5$. I did notice during this experiment that $y_1=1.2, z=5.3$ leads to the non standard case, with the limit converging to $3$ and $x$ being a rational number. So the definition of the standard case needs some refinement (at least to handle non-integer initial values) and examples leading to the non-standard case (though rare) may be more numerous than initially thought.

Another interesting chart

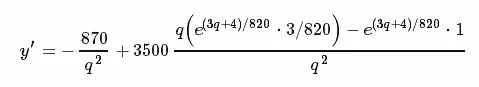

Here I try to build a second order approximation for the limit. Let

$$L(n)= \frac{1}{n}\left(z_{n+1} - \sum_{k=1}^n z_k\right) \tag{$\star$}$$

I am interested in the error $E(n) = \sqrt{n}\cdot \Big(L(n)-1\Big)$. In a traditional problem, you would expect $E(n)$ to tend to a constant as $n \rightarrow\infty$. Not here, the error behaves like a Brownian motion, again, just like in the central limit theorem. Yet the system is fully deterministic here. Note that I used $y(1)=2, z(1)=5$ to produce the chart below.

While I did not test it, I would expect that the same Brownian behavior occurs regardless of the initial conditions, as long as we are dealing with the standard case.

Also, if you look closely at the above chart, I believe it is NOT a true Brownian motion. It is too regular, and most importantly, the error seems to be bounded. The (apparently) bounded error, together with the non-dependence on the initial conditions (yet to be verified) for the probabilistic behavior, makes me think that this problem, after all, might be solvable. And maybe another way to sole this problem is to get good enough asymptotic expansions for $y_n$ and $z_n$.

Note

The recursion mentioned here is identical to the one featured in section 2.1 in this article after the change of variable $z_n = 4x_n + 1$. An additional change of variables, $w_n=z_n - 2y_n$, could prove useful.

A different approach

If the goal is to prove that that binary digits of $\sqrt{2}$ are uniformly distributed, a different approach is as follows. Consider the sequence $q_k=2^{-\{ k \log_2 3 \}}$ where the brackets represent the fractional part function. The number $q_k$ is rational, it has a period of $2 \cdot 3^{k-1}$ in its binary expansion, and the proportion of digits equal to zero is always 50%. The median of $\{q_1, q_2, \cdots q_n\}$ tends to $\sqrt{2}/2$ and if $n$ is odd, it is equal to one of the $q_k (1\leq k\leq n$): it is the middle value when these numbers are ordered. Thus the proportion of zero in the median is always exactly 50% if $n$ is odd. But is this also true at the limit as $n\rightarrow \infty$? Not necessarily, this is not the case if you consider the minimum or the maximum, instead of the median. So it is more complicated: some $q_k$ (infinitely many) must be removed to guarantee this fact, and they must be chosen carefully so as to not change the value of the limiting median.

One way to do this successfully is as follows. Keep only those $q_k$ that satisfy $|p_m(q_k) - \frac{1}{2}| < \frac{C}{\log m}$ for all $m=2,3, \cdots, \lfloor \log k\rfloor$ where $p_m(\alpha)$ is the proportion of digits of $\alpha$ that are equal to 1 among the first $m$ digits, and $C$ is a constant. Would this eventually eliminate $\sqrt{2}/2$? Probably not. Would this impact the limiting value of the median? Probably not. But these are extremely challenging questions. It is probably not hard to compute the exact proportion of $q_k$ that you eliminated by doing so. Yet it is not impossible that $\sqrt{2}/2$ can't be reached anymore after applying this thinning process, but only a neighboring irrational that shares (say) the same first $10^{10,000}$ digits.

Update: If the $q_k$ being removed were evenly spread (they may not), we are left with the same limit distribution (that of $2^{-X}$ where $X$ is uniform on $[0, 1]$). Thus its median $\sqrt{2}/2$ would stay the same. Or perhaps, after removing the set $S$ all those $q_k$, the median $M_n$ computed on $\{q_1,\cdots,q_n\} \setminus S$ still satisfies $|M_n - \frac{\sqrt{2}}{2}|<\frac{D}{\log n}$, where $D$ is a constant. That would be enough to prove the main result.

If you are only interested in finding a classic math constant $\alpha$ with 50% zeros and 50% ones in its binary expansion, then replace the median $M_n$ by the closest number to $\alpha$ among $\{ q_1, \cdots, q_n\}$. An example is $\alpha = \frac{1}{2 \log 2}$. This number is the expectation of the distribution in question, instead of the median. One interesting fact related to this number is the following. The $m$-th digit of $q_k$ averaged over all $k=1,2,\cdots$ is denoted as $\mu_m$. Its exact value is known and discussed in a previous MST question (here). For $m>1$, the sequence $\mu_m$ is strictly increasing and converges exponentially fast to $\frac{1}{2}$. An immediate consequence of the definition of $\mu_m$ is that $$\sum_{m=1}^\infty \frac{\mu_m}{2^m} = \frac{1}{2\log 2}.$$

Also, less obvious to prove but not difficult: we have $\mu_1=1$ and for $m>1$, we have:

$$\mu_m = \frac{1}{\log 2}\cdot\log \frac{2^{2^{m-1}} \cdot (2^{m-1})!^3 }{(2^{m-2})!^2 \cdot (2^m)!} .$$

More on this in my next article.

Conclusions and next steps

It looks like $\sqrt{n}\cdot\Big(L(n) - 1\Big) = O(1)$. This is enough to prove the main result in this discussion, but this asymptotic relationship has yet to be proved (or disproved). A weaker result, possibly easier to prove, is the following: $(\log n) \cdot \Big(L(n) - 1\Big) = o(1)$. This is also enough to prove the main result. See chart below illustrating this conjecture.

Another approach discussed in the section A different approach, does not seem to be easier.

A related question is whether or not, for two irrational numbers $\alpha, \beta$ linearly independent on the set of rational numbers, the correlation between the digits of $\alpha$ and $\beta$ in base $b$ is zero. To prove this, it suffices to prove that the sequences $\{ b^n\alpha\}$ and $\{ b^n\beta\}$ are not correlated. It was proved that this was true for the sequences $\{ n\alpha\}$ and $\{ n\beta\}$, see here. Unfortunately, this does not help. Here the brackets represent the fractional part function. But this epitomizes the issue that we are dealing with here. If $\alpha$ is irrational, then $\{n\alpha\}$ (sometimes called $n \alpha \mbox{ modulo } 1$) is equidistributed, regardless of $\alpha$ (irrational). But $\{b^n\alpha\}$ is equidistributed only for almost all irrational numbers. The first sequence is sometimes referred to as a universally good averaging sequence, while the latter is termed a universally bad averaging sequence: see this Wikipedia entry.

A weaker conjecture is the following: infinitely many irrational numbers $\sqrt{r}$ with $r$ a rational number, have 50% zeros and 50% ones in their binary expansion. You might be able to prove it without being able to name a single of these numbers satisfying this property (I tried and failed so far; it is probably still a mystery today.)

Finally, if you are interested to see how chaotic the behavior of non-normal numbers is (contrasted with supposedly normal numbers such as $\sqrt{2}$), read my recent MSE discussion, here. It gives some nice insights about what makes a number such as $\sqrt{2}, \pi, e$ or $\log 2$ stand out.

Update

The relation $\sqrt{n}\cdot\Big(L(n) - 1\Big) = O(1)$ is a direct consequence of the Berry-Essen theorem, a refinement of the central limit theorem that applies in this case. It would be true here if the digits of (say) $\sqrt{2}$ were i.i.d. with a Bernouilli distribution of parameter $p=\frac{1}{2}$, as they appear to be. Thus proving this asymptotic result for $\sqrt{2}$ would be very difficult at best, impossible at worst. But a weaker result might be reachable. Along the same lines, see a new question (with answer) that I posted recently on MSE, here, and also here. It shows what would happen if $\sqrt{2}$ was a well-behaved but non-normal number, say with 75% of its digits equal to $1$.

Update #2

I wrote an article based on this question and some other related questions, You can read here.